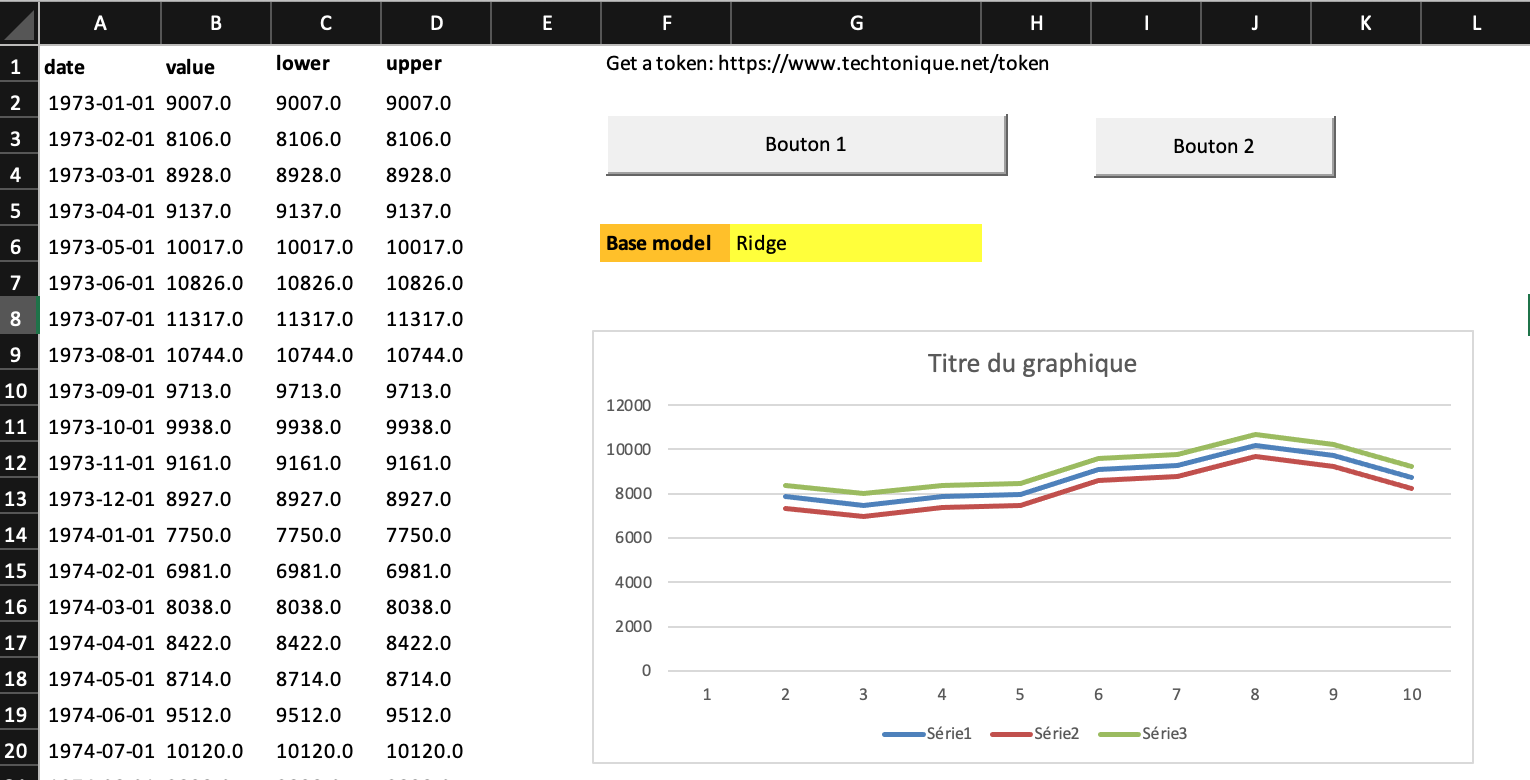

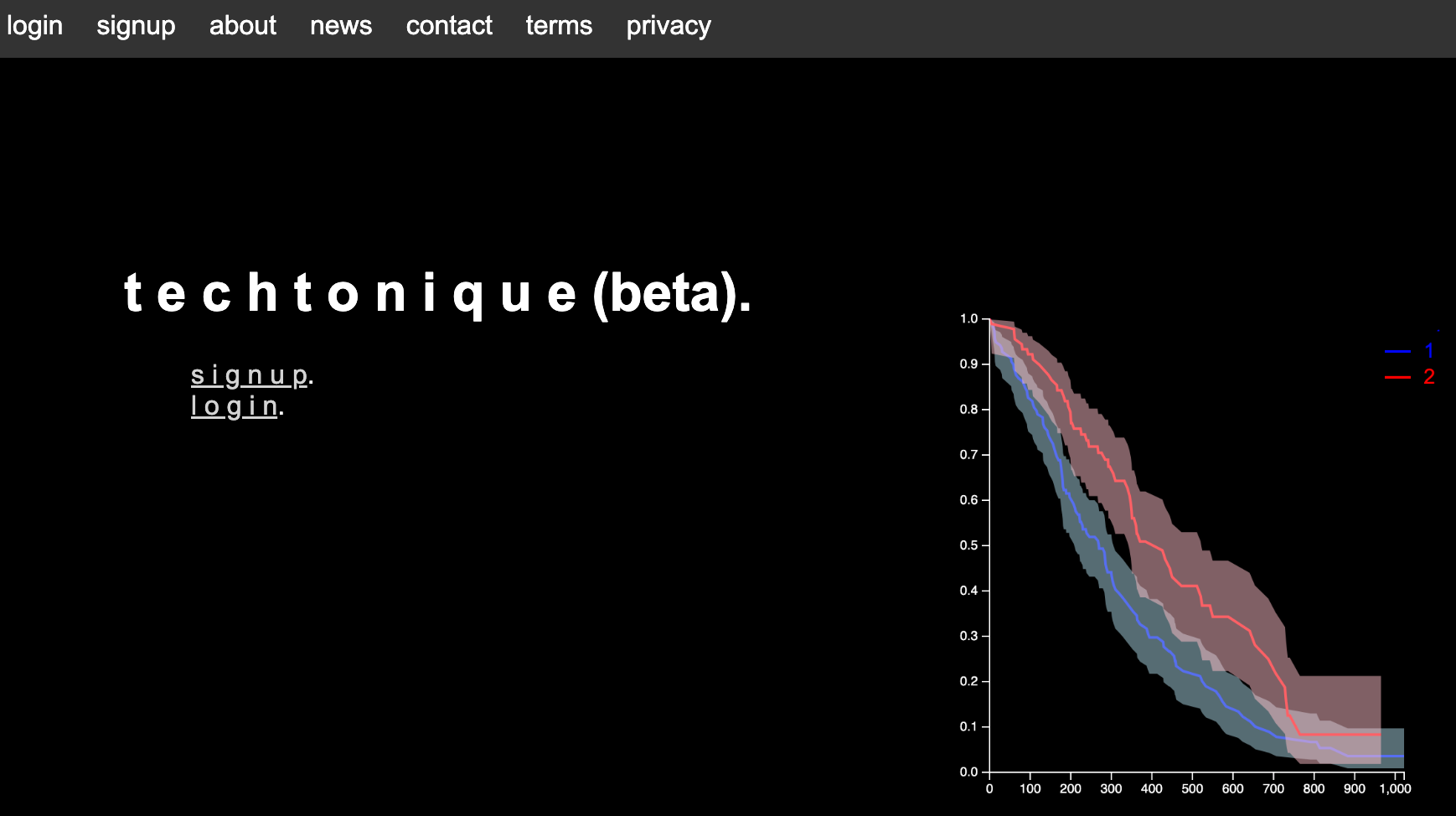

As a reminder from last week, you can run R or Python code interactively in your browser, on www.techtonique.net.

The Techtonique web app is a tool designed to help you make informed, data-driven decisions using Mathematics, Statistics, Machine Learning, and Data Visualization. As of September 2024, the tool is in its beta phase (it may crash) and will remain completely free to use until December 24, 2024. After registering, you will receive an email: CHECK YOUR SPAM FOLDER. A few selected users will be contacted directly for feedback, but anyone is welcome to send theirs.

The tool is built on Techtonique and the powerful Python ecosystem. For now, it focuses on small datasets, with a limit of 1MB per input. Both clickable web interfaces and Application Programming Interfaces (APIs, see below) are available.
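
If you plan to upload your own files, a quick local check against the 1MB limit can spare you a failed upload. Below is a minimal Python sketch. It assumes the limit means 1 MiB (1,048,576 bytes), which the text does not specify, and `fits_input_limit` is a hypothetical helper, not part of Techtonique.

```python
import os
import tempfile

# Assumption: "1MB" is read here as 1 MiB (1024 * 1024 bytes); the exact
# byte count enforced by the web app is not stated.
MAX_INPUT_BYTES = 1024 * 1024


def fits_input_limit(path: str, limit: int = MAX_INPUT_BYTES) -> bool:
    """Return True if the file at `path` is no larger than `limit` bytes."""
    return os.path.getsize(path) <= limit


# Usage: write a tiny CSV to a temporary file and check it.
with tempfile.NamedTemporaryFile("w", suffix=".csv", delete=False) as f:
    f.write("x,y\n1,2\n3,4\n")
    csv_path = f.name

print(fits_input_limit(csv_path))  # True: the file is only a few bytes
os.remove(csv_path)
```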

Currently, the available functionalities include:

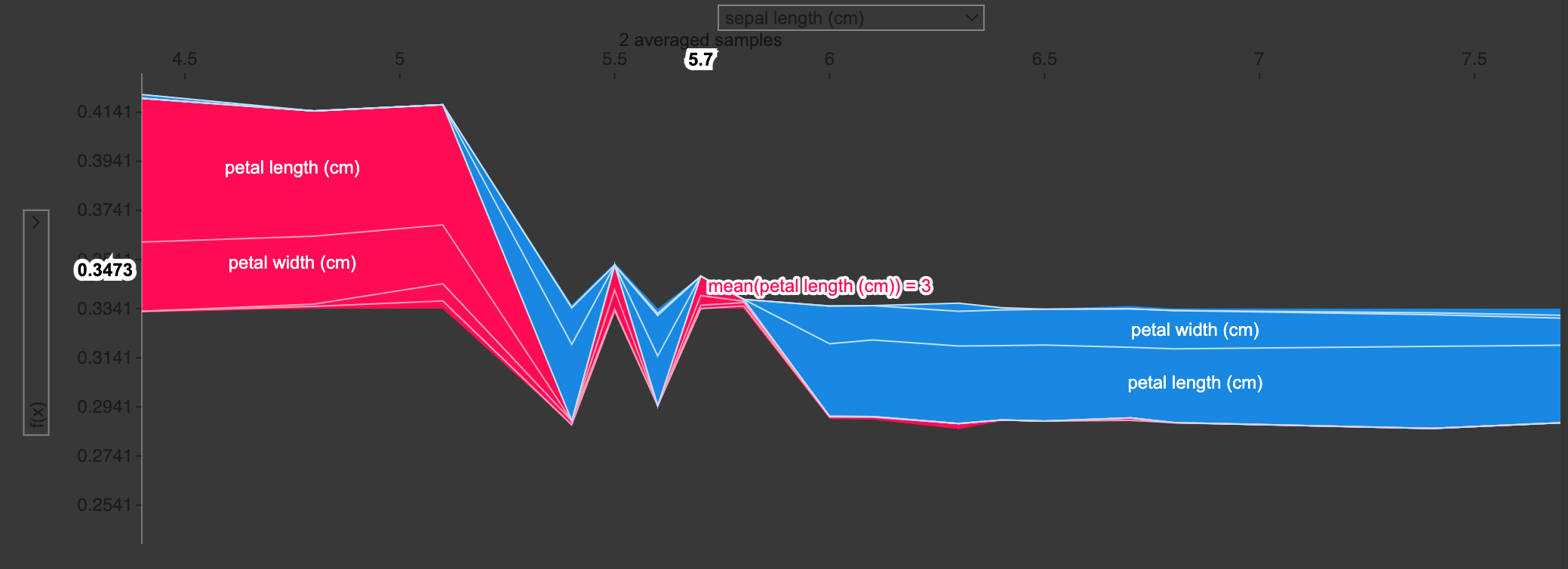

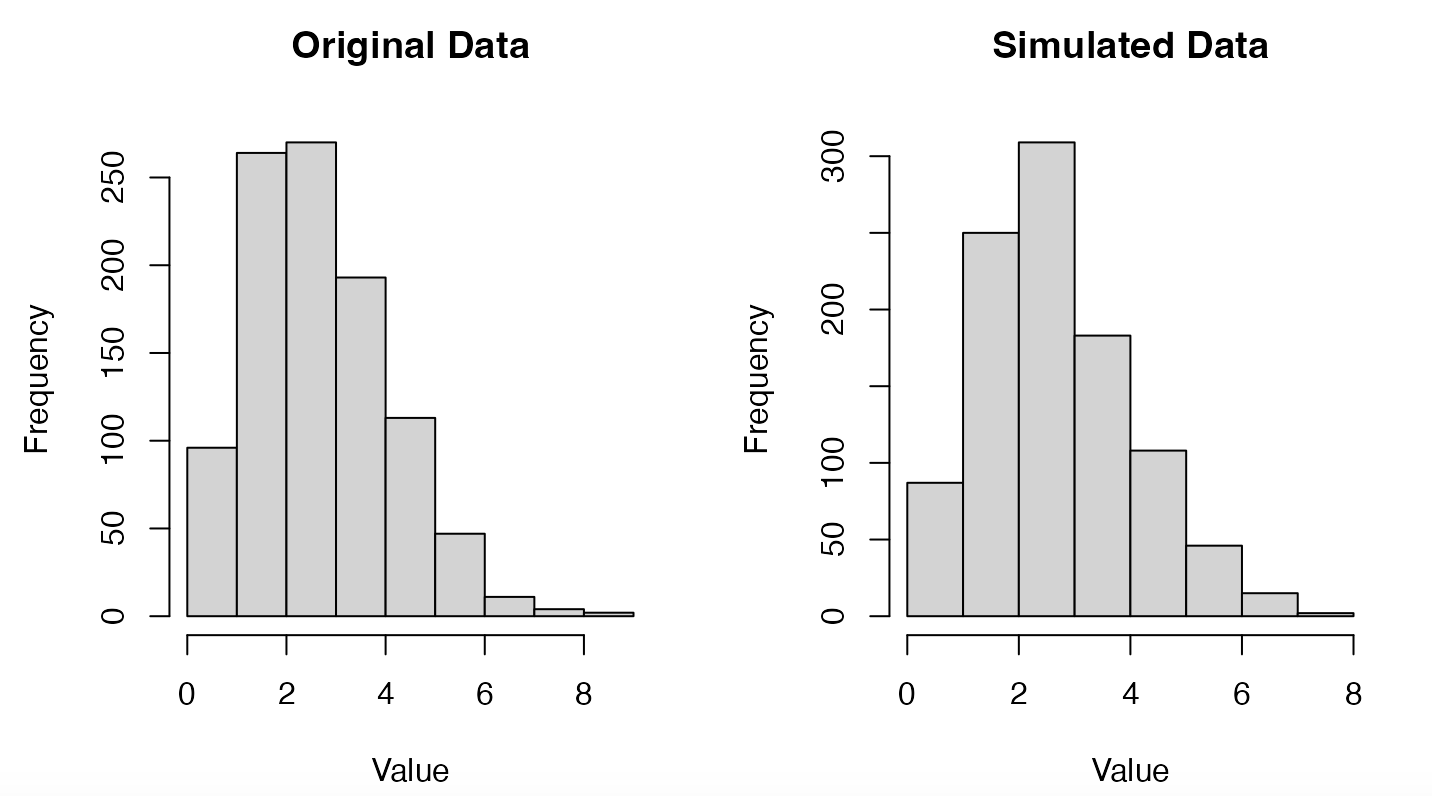

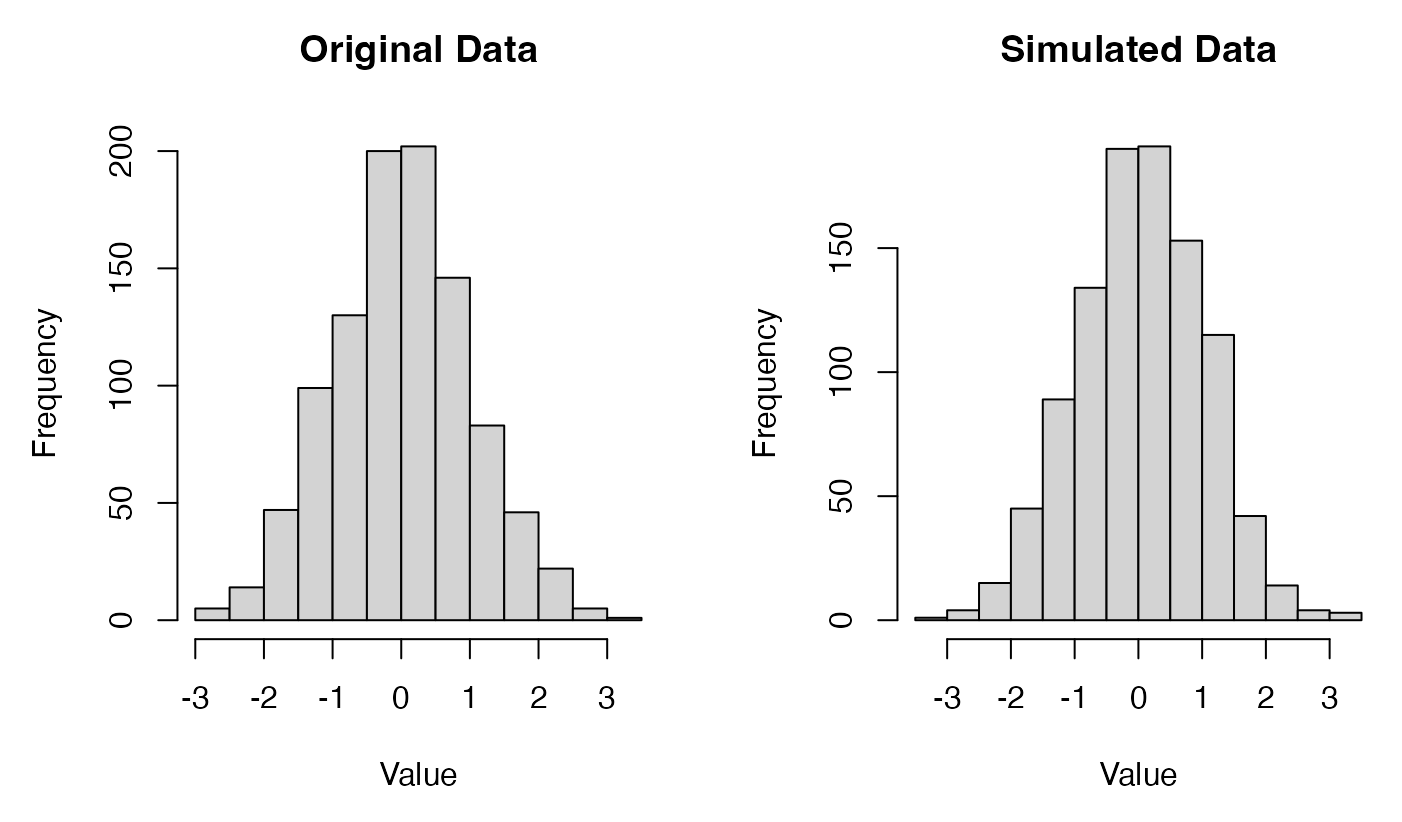

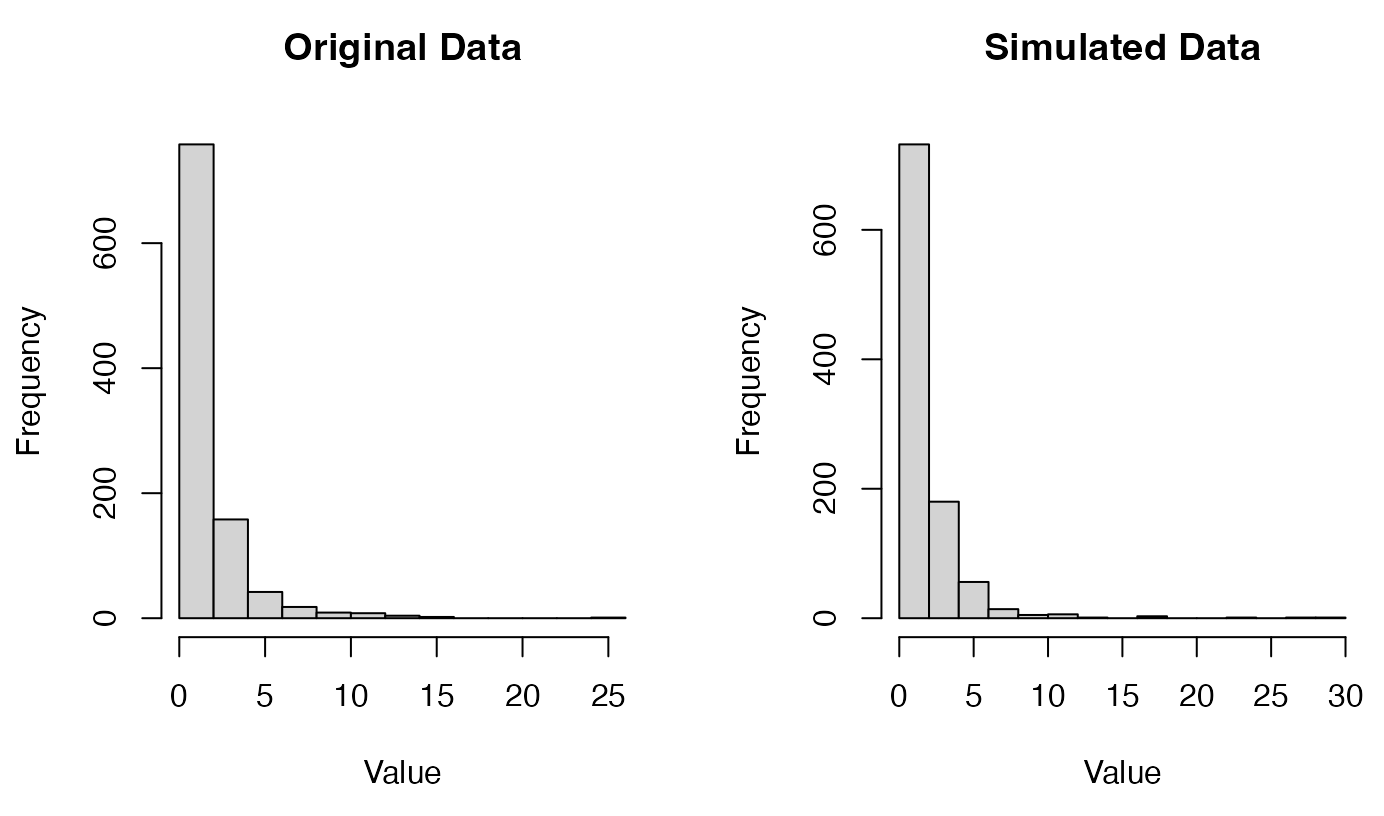

- Data visualization. Example: Which variables are correlated, and to what extent?

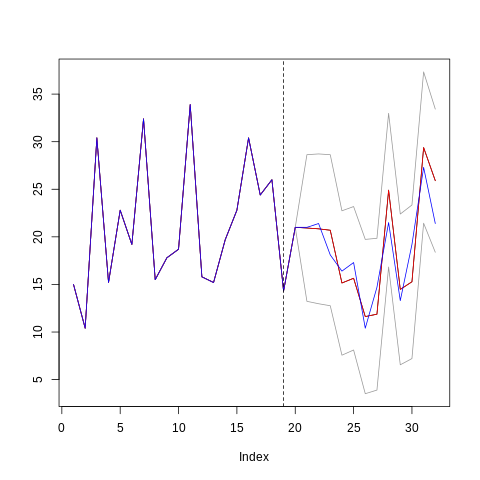

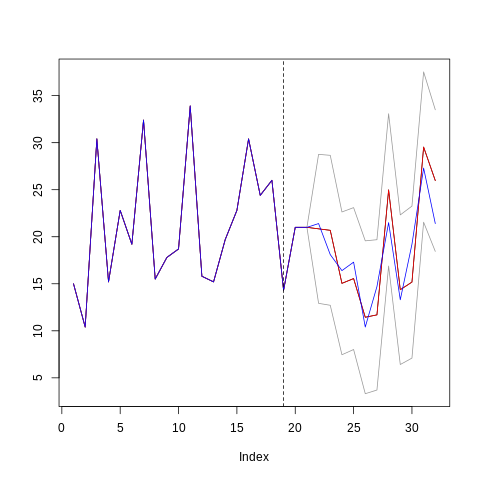

- Probabilistic forecasting. Example: What are my projected sales for next year, including lower and upper bounds?

- Machine Learning (regression or classification) for tabular datasets. Example: What is the price range of an apartment based on its age and number of rooms?

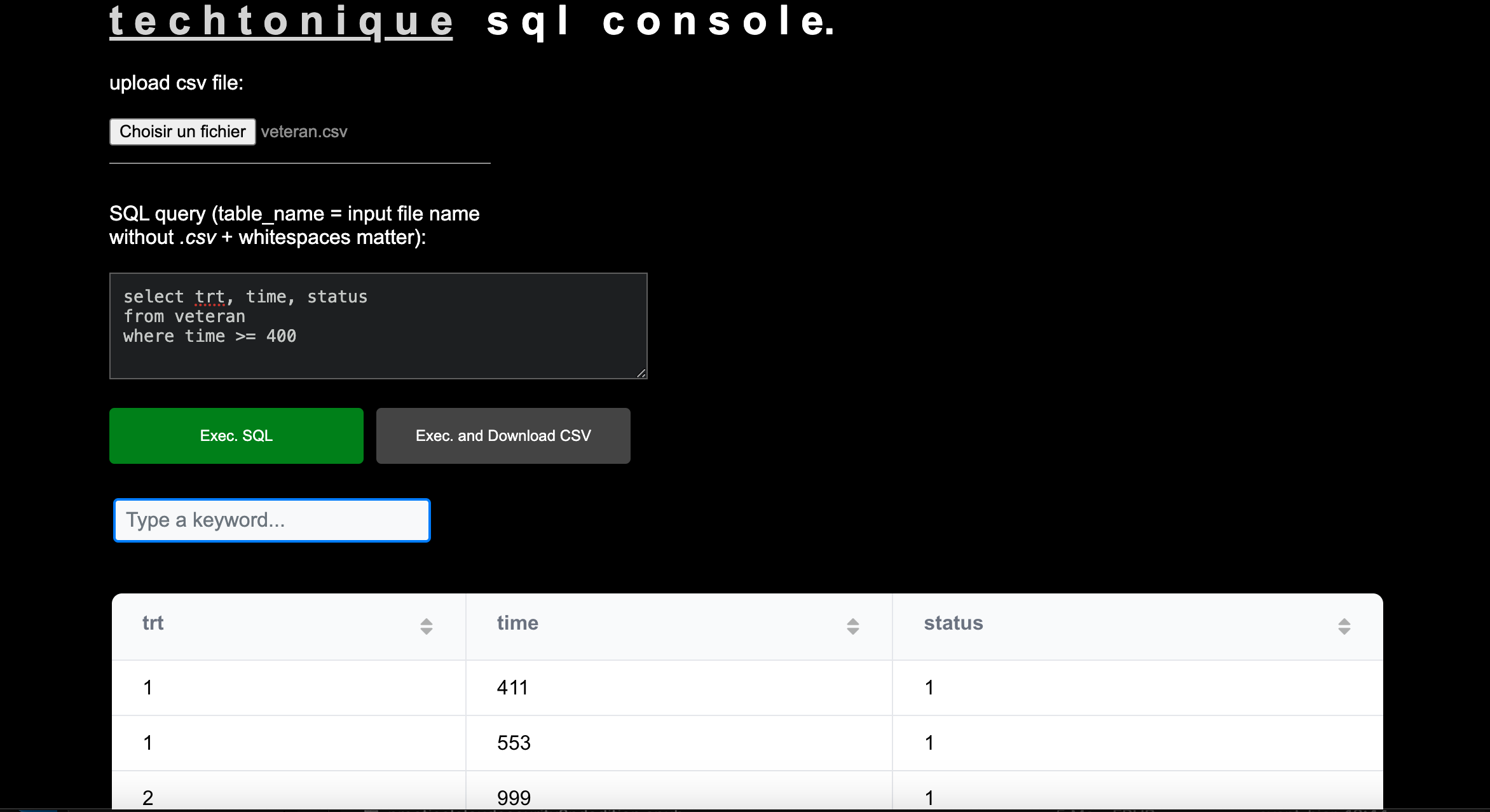

- Survival analysis (time-to-event data). Example: How long might a patient live after being diagnosed with Hodgkin’s lymphoma (cancer), and how accurate is this prediction?

- Reserving based on insurance claims data. Example: How much should I set aside today to pay claims on accidents that have already occurred, but will be reported or settled over the next few years?

As mentioned earlier, the tool offers both clickable web interfaces and APIs.

APIs let you send requests from your computer to perform specific tasks on given resources. They are programming-language-agnostic (you can call them from Python, R, JavaScript, etc.), relatively fast, and require no Techtonique-specific package to be installed. This means you can keep using your preferred programming language or legacy code/tool, as long as it can send requests over the internet. But what are requests and resources?

In Techtonique/APIs, resources are Statistical/Machine Learning (ML) model predictions or forecasts.

A common type of request might be to obtain sales, weather, or revenue forecasts for the next five weeks. In general, a request is short: it typically consists of a verb (the HTTP method) and a URL path, and the server sends back a response.
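
To make the "verb plus URL path" idea concrete, here is a minimal sketch using only Python's standard library (`urllib.request`), which also illustrates that no extra package is needed. The base URL `https://api.example.com` is a placeholder, and the requests are built but not sent.

```python
from urllib.request import Request

# Placeholder base URL, for illustration only: nothing is sent here.
BASE_URL = "https://api.example.com"

# A request is essentially a verb (HTTP method) plus a URL path.
list_users = Request(f"{BASE_URL}/users", method="GET")
delete_user = Request(f"{BASE_URL}/users/42", method="DELETE")

for req in (list_users, delete_user):
    print(req.get_method(), req.full_url)
# GET https://api.example.com/users
# DELETE https://api.example.com/users/42

# Sending one would be urllib.request.urlopen(req), which returns the response.
```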

Below is an example. In this case, the resource we want to manage is a list of users.

| Request type (verb) | URL path | Endpoint | API response |
|---|---|---|---|
| GET | `http://users` | `users` | Displays a list of all users |
| GET | `http://users/:id` | `users/:id` | Displays a specific user |
| POST | `http://users` | `users` | Creates a new user |
| PUT | `http://users/:id` | `users/:id` | Updates a specific user |
| DELETE | `http://users/:id` | `users/:id` | Deletes a specific user |
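
The table can also be read as a mapping from (verb, path) pairs to actions on the resource. The toy Python sketch below mimics such a server in memory; the `users` dictionary and the `handle` function are hypothetical and only illustrate the idea, with `:id` replaced by a concrete identifier such as `1`.

```python
import re

# Toy, in-memory stand-in for a server exposing the "users" resource.
# Names and storage are hypothetical, for illustration only.
users = {1: {"name": "Ada"}, 2: {"name": "Grace"}}


def handle(verb, path, body=None):
    """Dispatch a (verb, path) pair to the matching action on `users`."""
    if path == "/users":
        if verb == "GET":
            return list(users.values())          # list all users
        if verb == "POST":
            new_id = max(users, default=0) + 1   # create a new user
            users[new_id] = body
            return {"id": new_id, **body}
    match = re.fullmatch(r"/users/(\d+)", path)  # "/users/:id"
    if match:
        user_id = int(match.group(1))
        if user_id not in users:
            return {"error": "not found"}
        if verb == "GET":
            return users[user_id]                # display one user
        if verb == "PUT":
            users[user_id] = body                # update that user
            return users[user_id]
        if verb == "DELETE":
            return users.pop(user_id)            # delete that user
    return {"error": f"unsupported: {verb} {path}"}


print(handle("GET", "/users/1"))                    # {'name': 'Ada'}
print(handle("POST", "/users", {"name": "Linus"}))  # {'id': 3, 'name': 'Linus'}
print(handle("DELETE", "/users/2"))                 # {'name': 'Grace'}
print(handle("GET", "/users"))                      # [{'name': 'Ada'}, {'name': 'Linus'}]
```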

In Techtonique/APIs, a typical resource endpoint would be /MLmodel. Since the resources are predefined and do not need to be updated (PUT) or deleted (DELETE), every request is a POST request to a /MLmodel endpoint, sending your input data along with additional parameters for the ML model.
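
Applied to Techtonique/APIs, a request would then be a POST carrying your data and the model's parameters. The sketch below builds (without sending) such a request with Python's standard library. Everything specific here is an assumption made for illustration: the base URL, the bearer-token header, the JSON payload layout, and the parameter names `horizon` and `level`. The actual conventions (which may, for instance, rely on a file upload rather than JSON) are described on the /howtoapi page.

```python
import json
from urllib.request import Request

# Hypothetical values: the real base URL, endpoint names, authentication
# scheme and parameter names are documented on the /howtoapi page.
BASE_URL = "https://api.example.com"
TOKEN = "YOUR_TOKEN_HERE"


def build_model_request(endpoint, params, data_csv):
    """Build (without sending) a POST request to a /MLmodel-style endpoint."""
    payload = {"params": params, "data": data_csv}  # hypothetical layout
    return Request(
        f"{BASE_URL}/{endpoint}",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {TOKEN}",
        },
        method="POST",
    )


req = build_model_request(
    "MLmodel",                    # placeholder for a real model endpoint
    {"horizon": 5, "level": 95},  # hypothetical: 5 steps ahead, 95% bounds
    "date,sales\n2024-01-01,10\n2024-01-08,12\n",
)
print(req.get_method(), req.full_url)  # POST https://api.example.com/MLmodel
# Sending it would be urllib.request.urlopen(req), returning the response.
```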

After reading this, you can proceed to the /howtoapi page.